Diagnose Gradient Entanglement

EAGC starts from an optimization view of GCD and identifies two coupled failure modes: gradient direction deviation for labeled samples and subspace overlap between known and novel classes.
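As a minimal sketch, gradient direction deviation for labeled samples can be quantified by comparing the gradient a labeled batch receives under the joint supervised + unsupervised objective with the gradient of the supervised loss alone. The names `cosine` and `direction_deviation` and the angle-based metric are our assumptions; the page does not give the paper's exact diagnostic.

```python
import math
from typing import Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two flattened gradient vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine is undefined for a zero-norm gradient")
    return dot / (norm_a * norm_b)


def direction_deviation(g_joint: Sequence[float], g_sup: Sequence[float]) -> float:
    """Hypothetical diagnostic: angle (degrees) between the labeled-batch
    gradient under the joint objective and under the supervised loss alone.
    0 means no deviation; values above 90 mean the directions conflict."""
    c = max(-1.0, min(1.0, cosine(g_joint, g_sup)))  # clamp rounding before acos
    return math.degrees(math.acos(c))


# Toy example: the unsupervised term [0, 1] pulls the supervised gradient
# [1, 0] sideways, so the joint gradient [1, 1] deviates by 45 degrees.
g_sup = [1.0, 0.0]
g_unsup = [0.0, 1.0]
g_joint = [s + u for s, u in zip(g_sup, g_unsup)]
print(round(direction_deviation(g_joint, g_sup), 6))
```

In a real model, `g_joint` and `g_sup` would be the flattened parameter gradients of the same labeled batch computed with and without the unsupervised loss term.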

CVPR 2026

EAGC is a plug-and-play gradient-level module for generalized category discovery that explicitly mitigates gradient entanglement and consistently improves existing GCD frameworks.

EAGC diagnoses gradient entanglement from both gradient-direction and feature-subspace perspectives, then addresses it with anchor-based alignment and energy-aware projection.

Generalized Category Discovery (GCD) leverages labeled data to categorize unlabeled samples from known or unknown classes. Most previous methods jointly optimize supervised and unsupervised objectives and achieve promising results. However, inherent optimization interference still limits their ability to improve further.

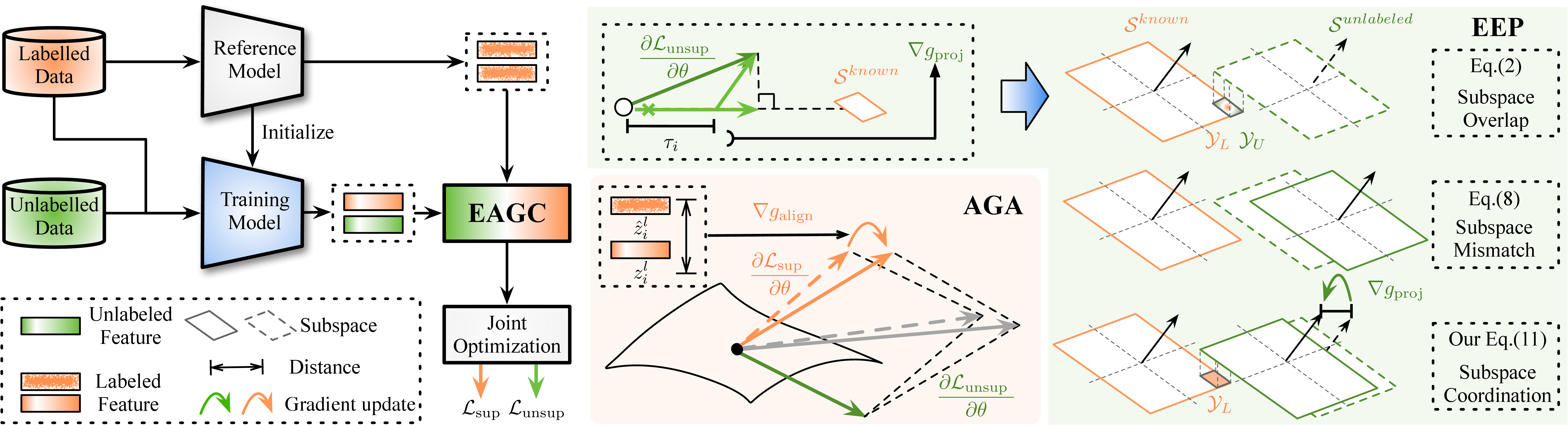

Through quantitative analysis, we identify a key issue, gradient entanglement, which (1) distorts supervised gradients and weakens discrimination among known classes, and (2) induces representation-subspace overlap between known and novel classes, reducing the separability of novel categories. To address this issue, we propose the Energy-Aware Gradient Coordinator (EAGC), a plug-and-play gradient-level module that explicitly regulates the optimization process. EAGC comprises two components: Anchor-based Gradient Alignment (AGA) and Energy-aware Elastic Projection (EEP). AGA introduces a reference model to anchor the gradient directions of labeled samples, preserving the discriminative structure of known classes against the interference of unlabeled gradients.
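AGA's exact update rule is not stated on this page, so the following is only a minimal sketch of one way to anchor a labeled gradient to a reference direction. It swaps in a PCGrad-style conflict projection: `g` stands for the labeled-sample gradient of the current model under the joint objective, and `g_ref` for the gradient of the same labeled batch from a supervised reference model. The function name `anchor_align` and the projection rule are our assumptions, not the authors' method.

```python
from typing import List, Sequence


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def anchor_align(g: Sequence[float], g_ref: Sequence[float]) -> List[float]:
    """Sketch of anchoring a labeled gradient `g` to a reference direction.

    PCGrad-style rule used as a stand-in (not the paper's exact AGA):
    if `g` points against the anchor `g_ref` (negative inner product),
    the conflicting component along `g_ref` is removed; otherwise `g`
    is returned unchanged.
    """
    ref_sq = dot(g_ref, g_ref)
    if ref_sq == 0.0:
        return list(g)  # no usable anchor direction
    inner = dot(g, g_ref)
    if inner >= 0.0:
        return list(g)  # already consistent with the anchor
    scale = inner / ref_sq
    return [x - scale * r for x, r in zip(g, g_ref)]


# The current labeled gradient has drifted against the anchor along the
# second axis; after alignment that conflicting part is removed.
print(anchor_align([1.0, -1.0], [0.0, 1.0]))
```

Projecting only on conflict leaves labeled gradients untouched whenever they already agree with the anchor; a softer variant that blends `g` toward `g_ref` would be an equally hypothetical alternative.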

EEP softly projects unlabeled gradients onto the complement of the known-class subspace and derives an energy-based coefficient to adaptively scale the projection for each unlabeled sample according to its degree of alignment with the known subspace, thereby reducing subspace overlap without suppressing unlabeled samples that likely belong to known classes.
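A minimal sketch of an energy-weighted soft projection, under explicit assumptions: the known-class subspace is spanned by a few hypothetical known-class directions (for example, class prototypes) living in the same space as the gradients; the "energy" is taken as the fraction of a gradient's squared norm that lies in that subspace; and the projection strength is `alpha = 1 - energy`. Samples well aligned with the known subspace (likely known-class) are thus barely projected, while weakly aligned ones lose almost all of their known-subspace component. The paper's actual energy coefficient may be defined differently, and names such as `elastic_project` are hypothetical.

```python
import math
from typing import List, Sequence

Vector = List[float]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def orthonormal_basis(vectors: Sequence[Sequence[float]], eps: float = 1e-12) -> List[Vector]:
    """Gram-Schmidt: orthonormal basis of the span of `vectors`
    (here, hypothetical known-class directions such as prototypes)."""
    basis: List[Vector] = []
    for v in vectors:
        w = list(v)
        for u in basis:
            c = dot(w, u)
            w = [wi - c * ui for wi, ui in zip(w, u)]
        norm = math.sqrt(dot(w, w))
        if norm > eps:  # drop directions already in the span
            basis.append([wi / norm for wi in w])
    return basis


def project(v: Sequence[float], basis: List[Vector]) -> Vector:
    """Orthogonal projection of `v` onto the span of an orthonormal basis."""
    p = [0.0] * len(v)
    for u in basis:
        c = dot(v, u)
        p = [pi + c * ui for pi, ui in zip(p, u)]
    return p


def known_energy(v: Sequence[float], basis: List[Vector]) -> float:
    """Fraction of the squared norm of `v` inside the known subspace, in [0, 1]."""
    total = dot(v, v)
    if total == 0.0:
        return 0.0
    p = project(v, basis)
    return dot(p, p) / total


def elastic_project(g: Sequence[float], basis: List[Vector]) -> Vector:
    """Softly remove the known-subspace component of an unlabeled gradient.

    alpha = 1 - energy: strongly aligned gradients (likely known classes)
    are barely changed; weakly aligned ones (likely novel) are pushed
    toward the complement of the known subspace.
    """
    alpha = 1.0 - known_energy(g, basis)
    p = project(g, basis)
    return [gi - alpha * pi for gi, pi in zip(g, p)]


# Known subspace = first axis. The gradient [1, 1, 0] has energy 0.5 there,
# so half of its known-subspace component is removed.
basis = orthonormal_basis([[1.0, 0.0, 0.0]])
print(elastic_project([1.0, 1.0, 0.0], basis))
```

For this toy input the result is `[0.5, 1.0, 0.0]`: a fully aligned gradient such as `[2, 0, 0]` would pass through unchanged, and a fully orthogonal one has nothing to remove.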

Experiments show that EAGC consistently boosts existing methods and establishes new state-of-the-art results.


AGA uses a supervised reference model as an anchor, stabilizing labeled-sample gradients so known classes preserve a reliable and discriminative optimization trajectory.

EEP softly projects unlabeled gradients away from the known-class subspace with adaptive energy-based weights, improving novel-class separability without over-suppressing unlabeled known samples.

Framework overview of EAGC. AGA stabilizes labeled gradients with a reference anchor, while EEP adaptively projects unlabeled gradients away from the known-class subspace.

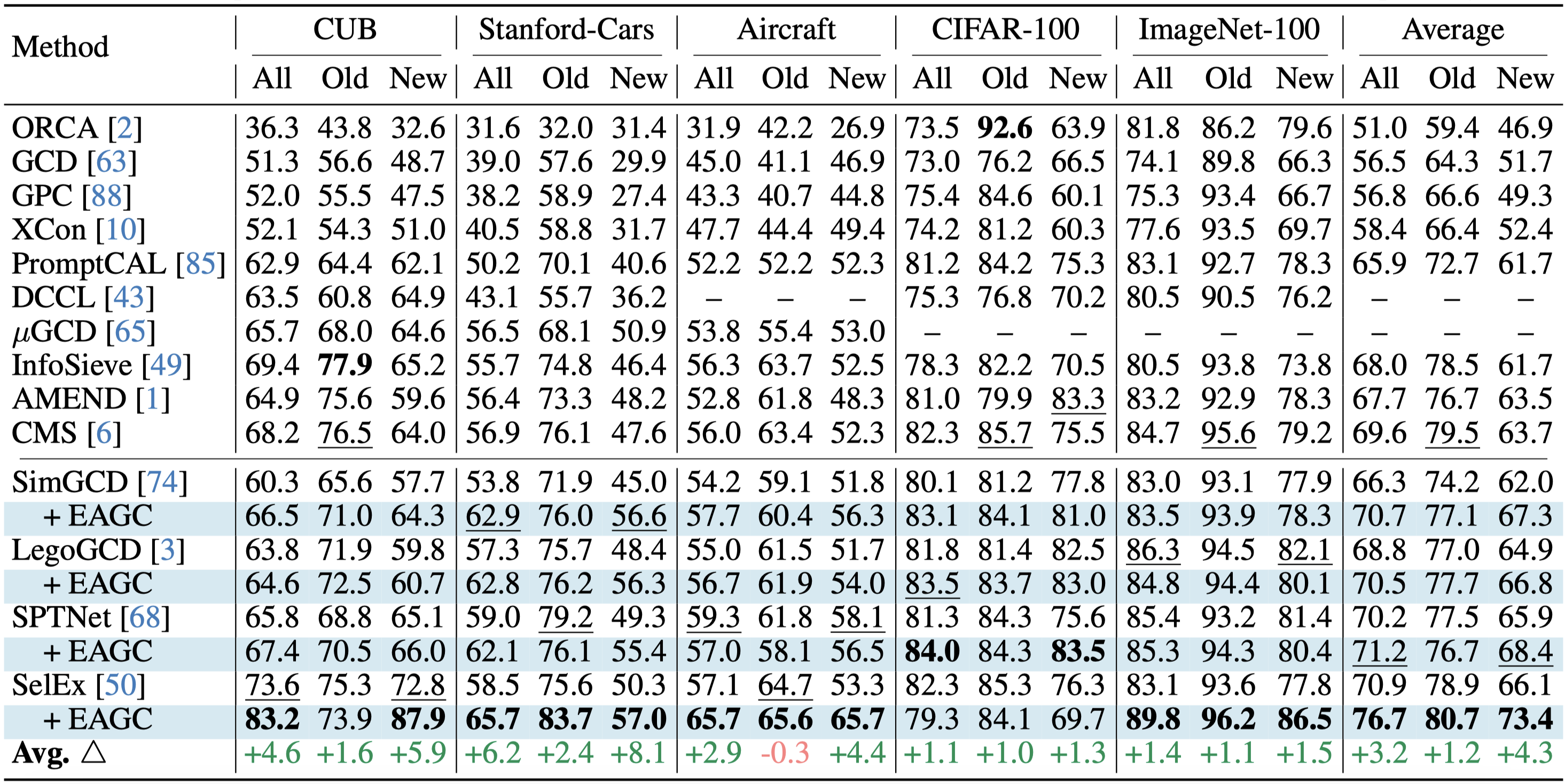

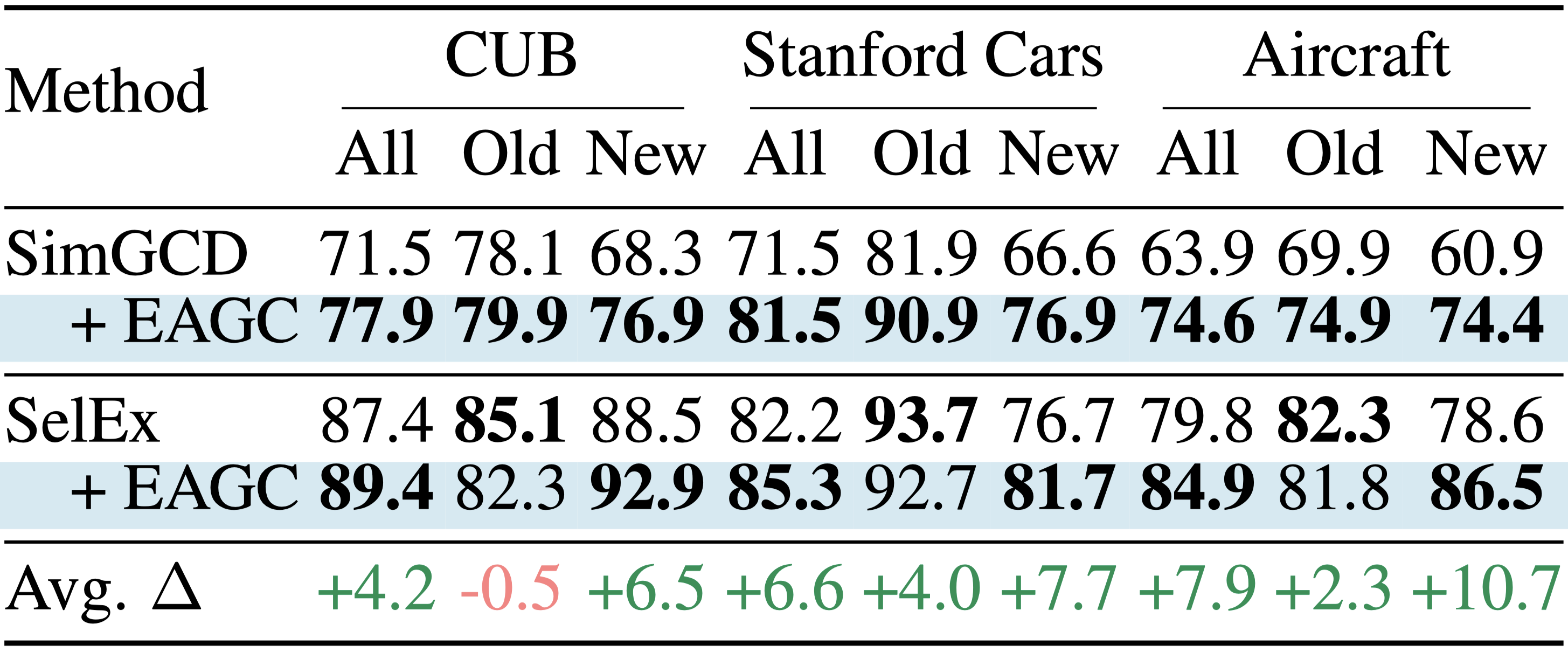

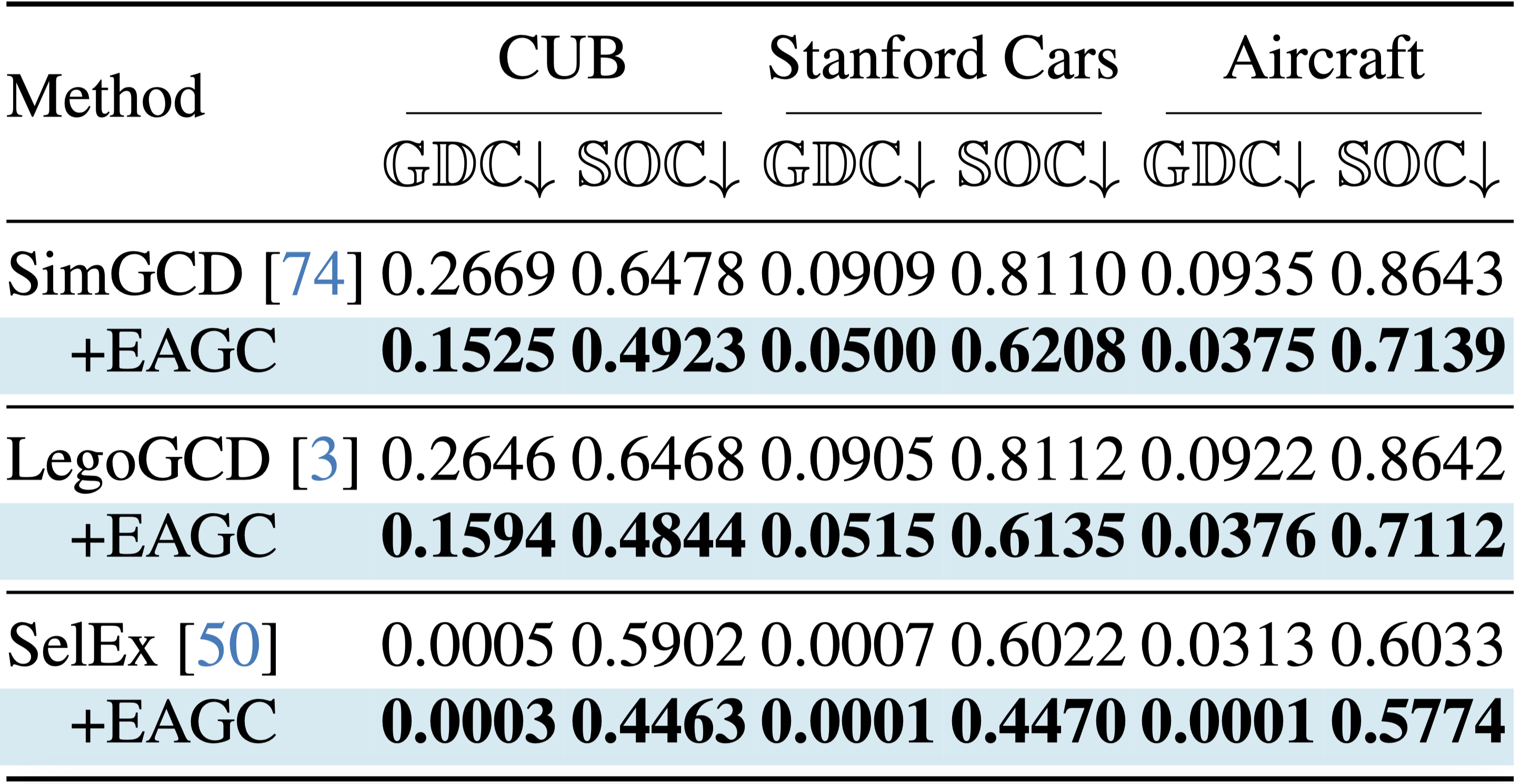

We highlight representative quantitative results and qualitative analyses here. The table regions below are reserved for the final rendered figures, while supplementary visualizations are already included.

Primary comparison table showing the performance gains of EAGC over existing baselines.

Comparison table where the backbone is replaced with DINOv2.

Quantitative diagnosis table measuring gradient deviation and known-novel subspace overlap.
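The exact overlap metric used in the diagnosis table is not given here. One standard way to score how much a novel-class subspace overlaps a known-class one is the mean squared cosine of their principal angles, i.e. ||U_k^T U_n||_F^2 / min(d_k, d_n) for orthonormal bases U_k and U_n. The sketch below assumes both bases (for example, top principal directions of known- and novel-class features) have already been computed and orthonormalized; `subspace_overlap` is a hypothetical name.

```python
from typing import List, Sequence

Vector = Sequence[float]


def subspace_overlap(known_basis: List[Vector], novel_basis: List[Vector]) -> float:
    """Mean squared cosine of the principal angles between two subspaces.

    Assumes each argument is a non-empty list of orthonormal vectors of the
    same dimension. The squared Frobenius norm of U_k^T U_n equals the sum of
    squared cosines of the principal angles, so dividing by the number of
    angles, min(d_k, d_n), yields a score in [0, 1]: 0 for orthogonal
    subspaces, 1 when the smaller subspace lies inside the larger one.
    """
    if not known_basis or not novel_basis:
        raise ValueError("both bases must be non-empty")
    frob_sq = sum(
        sum(a * b for a, b in zip(u, v)) ** 2
        for u in known_basis
        for v in novel_basis
    )
    return frob_sq / min(len(known_basis), len(novel_basis))


# Hypothetical 3-D toy: known classes span the x-axis; a novel direction
# halfway between x and y shares half of its energy with the known subspace.
s = 2 ** -0.5
print(subspace_overlap([[1.0, 0.0, 0.0]], [[s, s, 0.0]]))
```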

Feature distributions across datasets show that EAGC yields clearer boundaries and stronger cluster separation than the baselines.

Attention maps on CUB indicate that EAGC helps the model focus on more concentrated and fine-grained discriminative regions.


@inproceedings{zheng2026devil,
  title     = {The Devil Is in Gradient Entanglement: Energy-Aware Gradient Coordinator for Robust Generalized Category Discovery},
  author    = {Zheng, Haiyang and Pu, Nan and Cai, Yaqi and Long, Teng and Li, Wenjing and Sebe, Nicu and Zhong, Zhun},
  booktitle = {Proceedings of the Computer Vision and Pattern Recognition Conference},
  year      = {2026},
  url       = {https://haiyangzheng.github.io/EAGC/}
}